By guest author: William H. Shapiro, AuD, CCC-A – Lester S. Miller, Jr and Kathleen V. Miller Clinical Assistant Professor of Hearing Health, Clinical Associate Professor in Otolaryngology, Supervising Audiologist, NYU Cochlear Implant Center

Artificial intelligence (AI) continues to change the way we live our lives. Gone are the days of traveling across the country, or even across the city, with a paper map in hand. Now we only need to tell our smartphones where we want to go, and the friendly AI-powered avatar tells us when to turn and how long it will take to arrive at our destination. The same is soon to be true in our work lives as well.

As Brian Kaplan noted in a previous ProNews Blog, artificial intelligence has changed the way medical professionals are working and how they arrive at decisions; the same is true for hearing healthcare. However, the change is proving to be much slower than in other sectors of healthcare.

In 2005, Robert Margolis recounted the history of diagnostic tests in audiology and how the profession has not kept pace with advanced technology. He quotes James Jerger, who stated in 1963 that almost all of the routine audiometric tests conducted could be done by a machine while audiologists would be occupied with analyzing and interpreting the results. Margolis summarized by stating, “My opinion is that if our profession does not embrace automated testing and drive the process of progress, other professions will do it for us.” [1] Over a decade has passed since Margolis’ comments, and automated audiometry is still not the standard of care for audiometrics, but progress has been made in hearing aid technology.

In 2004, Oticon announced the first use of artificial intelligence in a decision-making feature that compared the outcomes of particular feature combinations to provide the optimal voice-to-noise ratio at any given minute [2]. This is an example of rapid comparison technology that provides options without taking advantage of a closed-loop machine learning technique. Fourteen years later, in April 2018, Widex introduced the first hearing aid that uses input from the patient via a smartphone app, combined with real-life listening situations, to employ machine learning to optimize the user’s hearing experience. Widex reports that the machine learning moves beyond simple decision-making to assist the individual by using data from around the world to benefit all Widex Evoke™ users through big data analysis [3]. This step in technological advancement encourages audiology to take advantage of more sophisticated artificial intelligence systems to better serve the patients who seek our help.

In a recent study at The Chinese University of Hong Kong and Ann & Robert H. Lurie Children’s Hospital of Chicago, AI has been employed in a novel way to predict language development in deaf children using cochlear implants. A machine-learning algorithm used MRI scans taken before cochlear implant surgery to detect abnormal brain development with a high degree of accuracy, specificity and sensitivity. This provided clinicians with information about how to personalize post-surgery habilitation efforts to help those children develop language skills [4]. According to this study, AI shows promise in helping predict outcomes; AI can also be used to standardize and streamline cochlear implant fittings.

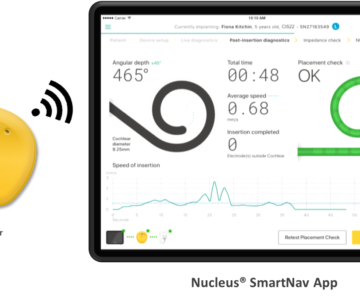

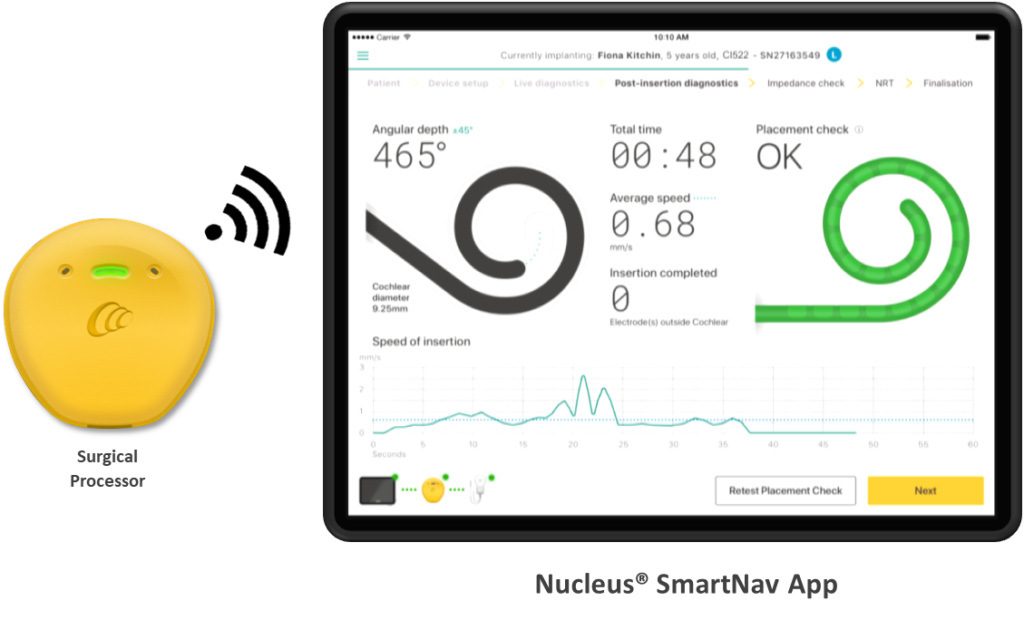

Currently, a clinical study in the United States employs an artificial intelligence engine to help standardize cochlear implant programming through a direct-connect system. Cochlear has licensed technology from Prof. Paul Govaerts of Belgium to evaluate recipient outcomes as well as clinician and recipient acceptance of a new AI technology. The AI system uses a closed loop of evaluation measures to provide outcome-driven fitting recommendations, giving a standardized approach to post-operative cochlear implant programming. The software test module may improve the clinician and recipient experience by allowing the testing to be delivered directly to the recipient’s sound processor outside of a test booth. The AI system provides several options to potentially improve outcomes, based on data across major cochlear implant systems and individual psychoacoustic test measures. These AI recommendations come from comparisons to millions of MAPs and parameter settings, which could substantially increase the chances of optimized outcomes while reducing programming time for the recipient and the clinician. Additionally, this evidence-based programming method could provide consistency in programming, outcomes and experience for the recipient, whether they see a novice or an experienced clinician.

Hearing aid fittings have used real-ear targets for decades; now we are studying AI technology to develop target-based fittings in cochlear implants as well. This exciting step into advanced AI use can help our profession provide the evidence needed to convince audiologists to try another tool in the toolbox of CI fittings. We are not looking to have an AI-assisted avatar tell us how to program, but as our industry grows and provides services to more patients, we do need to continue to standardize the way we provide cochlear implant technology so that more patients obtain optimal results.

See the references below for more information.

About our guest author:

William H. Shapiro received his Master of Arts degree in Audiology from Queens College of the CUNY in 1978. He received his Doctorate of Audiology from Arizona School of Health Sciences, A.T. Still University in 2007. He has worked at New York University since 1984, where he has been involved with all aspects of diagnostic and rehabilitative audiology, including the dispensing of assistive hearing technology (hearing aids, FM systems). He currently holds the title of Lester S. Miller, Jr. & Kathleen V. Miller Clinical Assistant Professor of Hearing Health in the Department of Otolaryngology, is Director of FGP Audiology, and is Supervising Audiologist at the New York University Cochlear Implant Center, a nationally recognized center of excellence in the field of cochlear implants. Dr. Shapiro has several publications in peer-reviewed journals as well as book chapters. He has presented at conventions both nationally and internationally. He serves on the advisory board of two cochlear implant manufacturers. He is the 2008 recipient of the “Outstanding Clinician Award” from the New York State Speech, Language and Hearing Association.

1. Margolis, R. (2005). “Automated Audiometry: Progress or Pariah?” White paper prepared for AudiologyOnline.

2. Schum, D. (June 2004). “Artificial Intelligence: The New Advanced Technology in Hearing Aids.” AudiologyOnline. https://www.audiologyonline.com/articles/artificial-intelligence-new-advanced-technology-1082

3. Widex. (April 2018). “Widex Launches the World’s First Machine Learning Hearing Aids.” Press release. https://global.widex.com/en/news/widex-launches-worlds-first-machine-learning-hearing-aid-evoke

4. Ann & Robert H. Lurie Children’s Hospital of Chicago. (2018, January 15). “Brain imaging predicts language learning in deaf children.” ScienceDaily. Retrieved August 6, 2018, from sciencedaily.com/releases/2018/01/180115151559.htm